Why We Did This

Our Consul cluster had become a silent tax on the infrastructure. It wasn’t failing — it was there, quietly consuming resources, requiring its own monitoring, its own disaster recovery procedures, and constant vigilance from the team. Every new engineer had to understand Consul, every deployment had to account for it, and every incident’s first question was “Is Consul up?”

When we looked at what Mimir actually needed, the answer was simpler than the system we were running. Mimir’s ring — the distributed ledger that tells every component where everything else is — doesn’t need strong consistency. It needs eventual consistency. It needs to self-heal when the network hiccups. It needs to survive the chaos of Kubernetes without a separate orchestration layer.

That’s when memberlist caught our attention.

The Case for Memberlist

| What Matters | Memberlist | Consul |
|---|---|---|
| Operational Overhead | Embedded in every pod | Separate cluster to manage |
| Consistency Model | Eventual (~5-10s via gossip) | Strong (immediate via CAS) |
| Network Resilience | Survives partitions gracefully | Quorum loss = cluster halt |
| Best For | Kubernetes (everything modern, really) | Existing Consul environments |

The key insight: Mimir’s write path already tolerates ~10 seconds of inconsistency. It retries writes to unreachable nodes. What it doesn’t tolerate is blocking on a central service. Moving the ring to gossip-based memberlist meant we could delete an entire system and actually improve reliability.

Before You Flip Any Switches

There are a few things you need to verify first, and we learned about one of them the hard way.

The Prometheus Port Gotcha: This one bit us. If your pod annotations say prometheus.io/port: "http-metrics" (a named port), Prometheus scrape configs will fall back to the first open port they find. In Mimir’s case, that’s the gossip port (7946), and the logs fill with "unknown message type G" errors. Change the annotation to prometheus.io/port: "8080" (a numeric string) before you start. We hit this within the first 5 minutes of the rollout.
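For reference, the annotation change looks roughly like this. It is a sketch: where the annotations sit in your pod template or Helm values is an assumption and will differ per chart; only the two prometheus.io/port values come from our setup.

```yaml
# Pod template metadata (placement is an assumption; adapt to your chart).
metadata:
  annotations:
    # Before: a named port, which sent scrapes to the gossip port (7946).
    # prometheus.io/port: "http-metrics"
    # After: the numeric HTTP port, quoted so YAML keeps it a string.
    prometheus.io/port: "8080"
```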

Beyond that:

- Confirm memberlist.join_members is pointed at your gossip-ring service (default: mimir-gossip-ring.<namespace>.svc.cluster.local:7946)

- Port 7946 (TCP and UDP) is open between all Mimir instances

- Your current Consul/etcd cluster is healthy

- You have access to edit runtime configuration without restarting pods
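As a rough sketch of the first item, the join address sits under the memberlist block of structuredConfig. The service name is the default quoted above and <namespace> is a placeholder; whether your chart already sets this for you is something to check rather than assume.

```yaml
mimir:
  structuredConfig:
    memberlist:
      # Gossip-ring service that new pods contact to join the cluster.
      join_members:
        - "mimir-gossip-ring.<namespace>.svc.cluster.local:7946"
```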

The Three-Phase Approach (And Why Each One Matters)

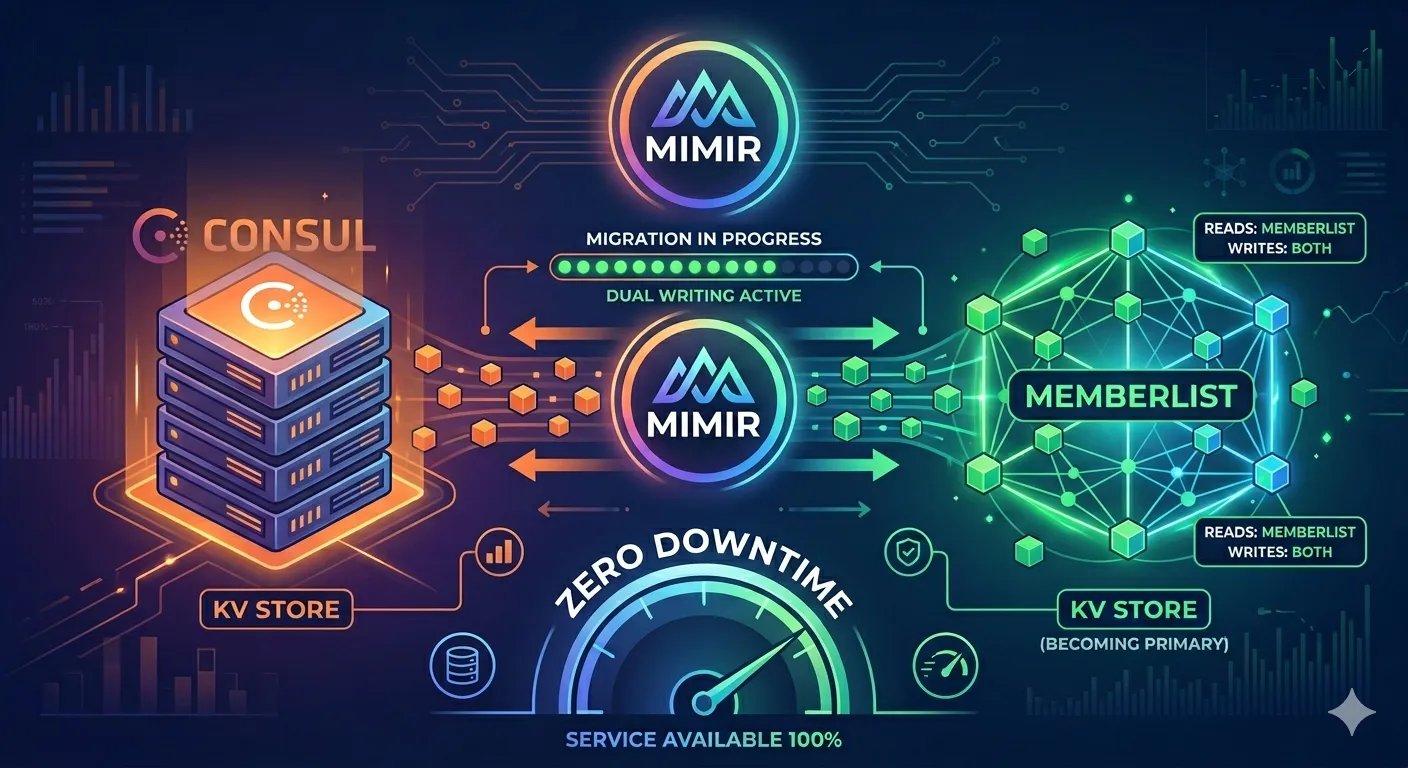

We didn’t migrate in one jump. We couldn’t — the ring would go empty, ingestion would fail, queries would die. Instead, we built a bridge using Mimir’s Multi KV feature, which lets the system write to two backends simultaneously.

    Phase 1: [Consul PRIMARY] ←→ [Memberlist SECONDARY mirror]
        ↓ (hot-reload, zero restart)
    Phase 2: [Memberlist PRIMARY] ←→ [Consul SECONDARY, no mirror]
        ↓ (rolling restart)
    Phase 3: [Memberlist ONLY] (Consul deleted)

This incremental approach meant we could roll back at any point before the final Consul deletion, and more importantly, it gave memberlist time to shadow the primary system and catch up. Trying to go straight to memberlist would be like switching a car’s engine while driving: possible in theory, catastrophic in practice.

Phase 1: Teaching Both Systems to Listen

The first move was configuration. We told Mimir: write to Consul as always, but also mirror everything to memberlist. Memberlist had no decisions to make yet — it just collected data.

We also set a cluster label (mimir-prod-us-east-1) to prevent a subtle-but-catastrophic failure mode: if multiple gossip clusters (Mimir, Loki, Prometheus) happen to run on the same IP range, their traffic could merge the rings. The cluster label is memberlist’s safety fence.

    mimir:
      structuredConfig:
        memberlist:
          cluster_label_verification_disabled: true  # Allow rollout without enforcement yet
          cluster_label: "mimir-prod-us-east-1"      # Unique ID for this cluster
        ingester:
          ring:
            kvstore: &kvstore
              store: multi
              multi:
                primary: consul        # Still in charge
                secondary: memberlist  # Collecting copies
                mirror_enabled: true
        distributor:
          ring:
            kvstore: *kvstore
        compactor:
          sharding_ring:
            kvstore: *kvstore
        store_gateway:
          sharding_ring:
            kvstore: *kvstore
        alertmanager:
          sharding_ring:
            kvstore: *kvstore
        ruler:
          ring:
            kvstore: *kvstore

We deployed, and the system kept running. Memberlist started building a full copy of every ring.

What looked scary but wasn’t: In the first 10-15 minutes, secondary write errors appeared in the logs. Secondary writes failed because the gossip cluster was still converging. But Consul (the primary) was serving all reads and writes perfectly. This is by design — if the secondary ever fails completely, the system keeps working.

What we learned: Watching the right metrics matters. The query rate(cortex_multikv_mirror_writes_total[5m]) confirmed that mirror writes were reaching memberlist. The errors were expected noise.

We waited for the gossip ring to fully form, but we didn’t just watch the clock. Before flipping memberlist to primary, we checked three metrics:

- cortex_multikv_mirror_enabled: should be 1. If it drops, mirroring has stopped and Phase 2 becomes unsafe.

- cortex_multikv_primary_store: should show consul at this stage. A different value means something has changed unexpectedly.

- cortex_multikv_mirror_write_errors_total: watch the rate. Errors are expected in the first 10-15 minutes while gossip converges, but this should settle to zero.

Hard gate: You must see a non-zero rate of successful writes to the secondary store before proceeding. This confirms memberlist is actively receiving ring updates — not just configured to. If this rate is zero, memberlist’s ring is empty and switching it to primary will cause an outage.

Only once all three metrics were healthy did we move to Phase 2.
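One way to make these checks harder to skip is to encode them as Prometheus alerting rules for the duration of Phase 1. The following is a sketch, not what we ran: the group and alert names, the hold durations, and the store label on cortex_multikv_primary_store are assumptions, and the hard-gate expression assumes the total counter includes failed attempts.

```yaml
groups:
  - name: mimir-multikv-phase1            # hypothetical group name
    rules:
      # Mirroring must stay on for the whole of Phase 1.
      - alert: MultiKVMirrorDisabled      # hypothetical alert name
        expr: min(cortex_multikv_mirror_enabled) == 0
        for: 1m
      # Consul should still be primary (the "store" label is an assumption).
      - alert: MultiKVUnexpectedPrimary
        expr: max(cortex_multikv_primary_store{store="memberlist"}) == 1
        for: 1m
      # Errors are expected while gossip converges; sustained errors are not.
      - alert: MultiKVMirrorWriteErrors
        expr: sum(rate(cortex_multikv_mirror_write_errors_total[5m])) > 0
        for: 15m
      # Hard gate: successful mirror writes (attempts minus errors) must be non-zero.
      # "or vector(0)" lets the rule fire even when the counters are missing.
      - alert: MultiKVMirrorNotWriting
        expr: |
          (
            sum(rate(cortex_multikv_mirror_writes_total[5m]))
              - sum(rate(cortex_multikv_mirror_write_errors_total[5m]))
            or vector(0)
          ) <= 0
        for: 10m
```

With rules like these loaded, "all clear" means none of the four alerts is pending or firing, which is easier to verify than eyeballing dashboards.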

Phase 2: The Painless Flip

Phase 2 was the elegant part. Instead of redeploying and restarting pods, we just updated runtimeConfig in the ConfigMap:

    runtimeConfig:
      multi_kv_config:
        primary: memberlist    # Flip reads+writes
        mirror_enabled: false  # Stop writing to Consul

That’s it. Mimir polls runtimeConfig every ~10 seconds. Within 30 seconds of this change, all six ring types had switched their primary to memberlist. No restarts. Zero downtime.

The contrast we discovered: Changing structuredConfig (which we used in Phase 1) triggers a pod rollout. Changing runtimeConfig is just a ConfigMap update that Mimir picks up on its next poll, with no restart. We made this mistake in our planning (trying to use structuredConfig for Phase 2) and caught ourselves before applying it. That’s the kind of detail that costs hours in debugging if you get it backwards.

After the flip, we monitored for 15 minutes. Ring convergence stayed healthy. No errors. Consul became secondary (and essentially unused). We were ready to cut it loose.
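Had the flip gone badly during those 15 minutes, the rollback would have been the same edit in reverse. This is a sketch of what we would have applied rather than something we had to run. It assumes Consul is still up, and because mirroring to Consul stopped at the flip, its copy of the ring may be stale until instances heartbeat into it again.

```yaml
runtimeConfig:
  multi_kv_config:
    primary: consul        # Hand reads and writes back to Consul
    mirror_enabled: true   # Resume mirroring to memberlist, as in Phase 1
```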

Phase 3: Cutting the Cord (After a Week of Monitoring)

Before we could take the irreversible step of deleting Consul, we had to prove memberlist could handle whatever our workload threw at it. We gave ourselves a full week of monitoring across all load scenarios — peak traffic hours, maintenance windows, spike patterns, the works.

Why a week? Because one day of good health doesn’t prove anything. Memberlist gossip operates on timescales that need days to validate — convergence under load, behavior during node churn, resilience when your deployment pipeline spins up and tears down pods. We watched:

- Peak traffic hours: Did ring convergence stay sub-30 seconds? Did gossip bandwidth stay reasonable?

- Maintenance windows: Did cluster rebuilds happen cleanly when pods were replaced?

- Spike patterns: If traffic suddenly tripled, did memberlist handle the gossip chatter?

- Overnight stability: Were there any error patterns that only appear under low traffic?

Only after a week of all-clear metrics did we proceed to the irreversible step: removing the Multi KV configuration entirely and deleting the Consul cluster.

In structuredConfig, every ring switched from multi to simple memberlist:

    mimir:
      structuredConfig:
        memberlist:
          cluster_label: "mimir-prod-us-east-1"  # Keep it; enforcement re-enabled
        ingester:
          ring:
            kvstore: &kvstore
              store: memberlist  # No multi, just memberlist
        # ... repeat for distributor, compactor, store_gateway, alertmanager, ruler

With confidence earned from a week of stable operation, we removed multi_kv_config from runtimeConfig entirely, redeployed (rolling restart), and then deleted the Consul pods.

The cluster label verification, which we had disabled in Phase 1, re-activated. From that point on, memberlist would only accept gossip traffic from nodes with matching labels — our safety fence was active. By the time this happened, we knew memberlist was ready.

What Went Wrong (And What Saved Us)

The Prometheus Port Saga

We spent the first hour wondering why our logs were full of "unknown message type G" errors from IPs we didn’t recognize. Turns out, they were Prometheus scrape requests hitting the gossip port. The root cause was our pod annotations using a named port instead of a numeric string. It’s the kind of gotcha that’s trivial once you know about it, invisible before you do.

Secondary Write Errors That Looked Scarier Than They Were

In Phase 1, we panicked when we saw "error writing to secondary KV store" in the logs. We thought the migration was failing. In reality, the memberlist gossip cluster wasn’t converged yet — secondary writes were racing the clock. These errors stopped on their own. We learned to trust our metrics and wait instead of reaching for the rollback button.

The Config Precedence Trap

This was our biggest planning mistake. runtimeConfig (hot-reload, every ~10 seconds) overrides structuredConfig (pod restart). We almost changed structuredConfig for Phase 2 before realizing it would trigger unnecessary restarts. The lesson: if you can hot-reload, do it. If you must restart, own that choice explicitly.

Was It Worth It?

Yes. Consul is gone. We have one fewer system to monitor, patch, and understand. Memberlist self-heals — when a node recovers from a network hiccup, the ring re-converges without intervention.

The bigger lesson was about incremental rollouts. We could have tried a direct cutover (risky), or a long-lived dual-write phase (wasting resources). Instead, the three-phase approach let us test each step, roll back if needed, and gain confidence. By the time we deleted Consul, we were certain.

If you’re running Mimir on Kubernetes, migrate to memberlist. Consul isn’t bad — it’s just unnecessary overhead. The ring doesn’t need a database. It needs a gossip network, and that’s exactly what memberlist is.